Adaptive AI: How Real-Time Model Switching Unlocks Smarter Applications

With dozens of new foundation models and APIs emerging every month, businesses face a tough challenge: how do you pick the right one at any given moment?

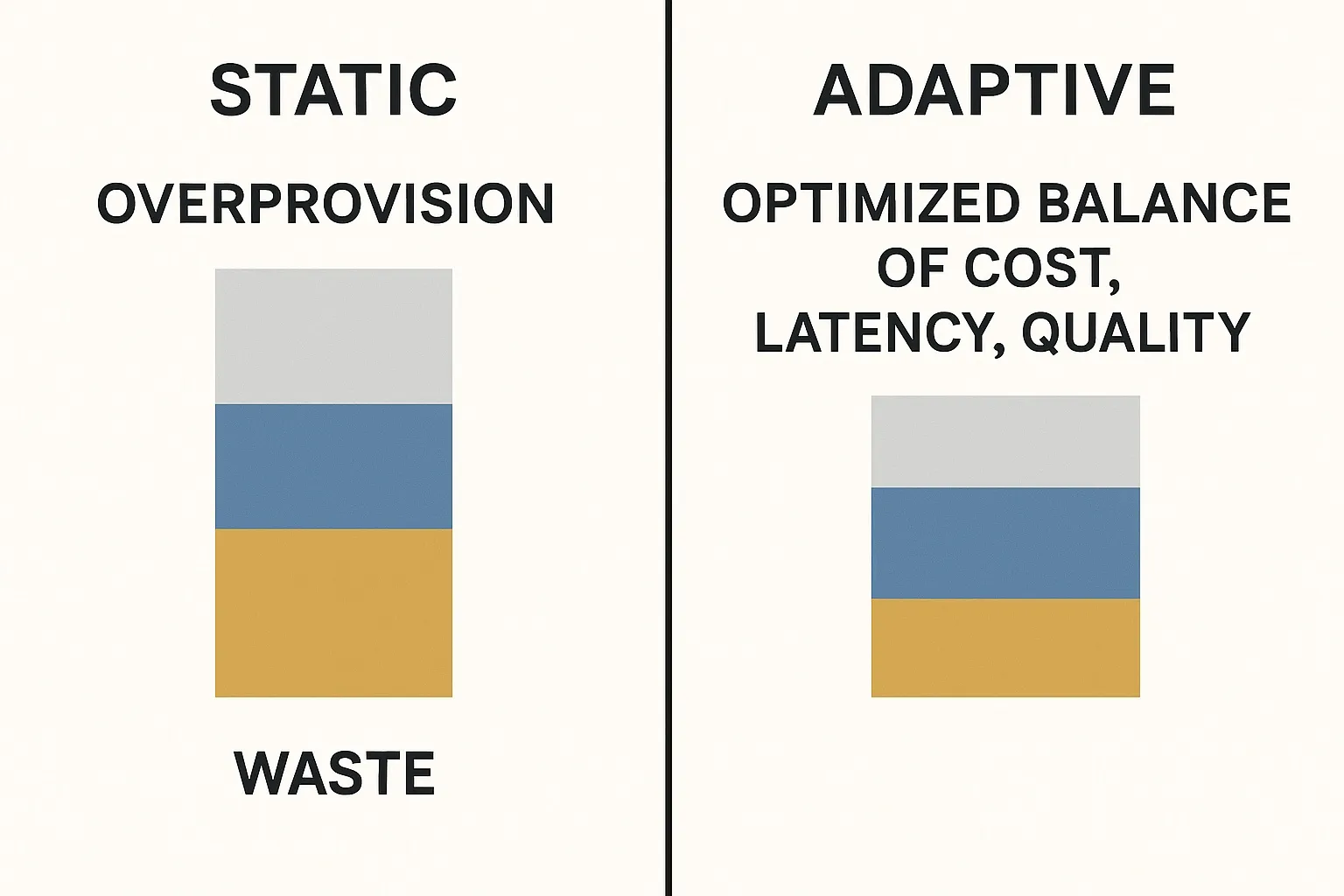

Choosing incorrectly means wasted resources, slower responses, or poor results.

That’s where adaptive AI infrastructure comes in. In practice, adaptive routing can reduce latency by 20–30% and lower costs significantly without sacrificing quality.

Most discussions focus on speed or cost, but the real game-changer is adaptability.

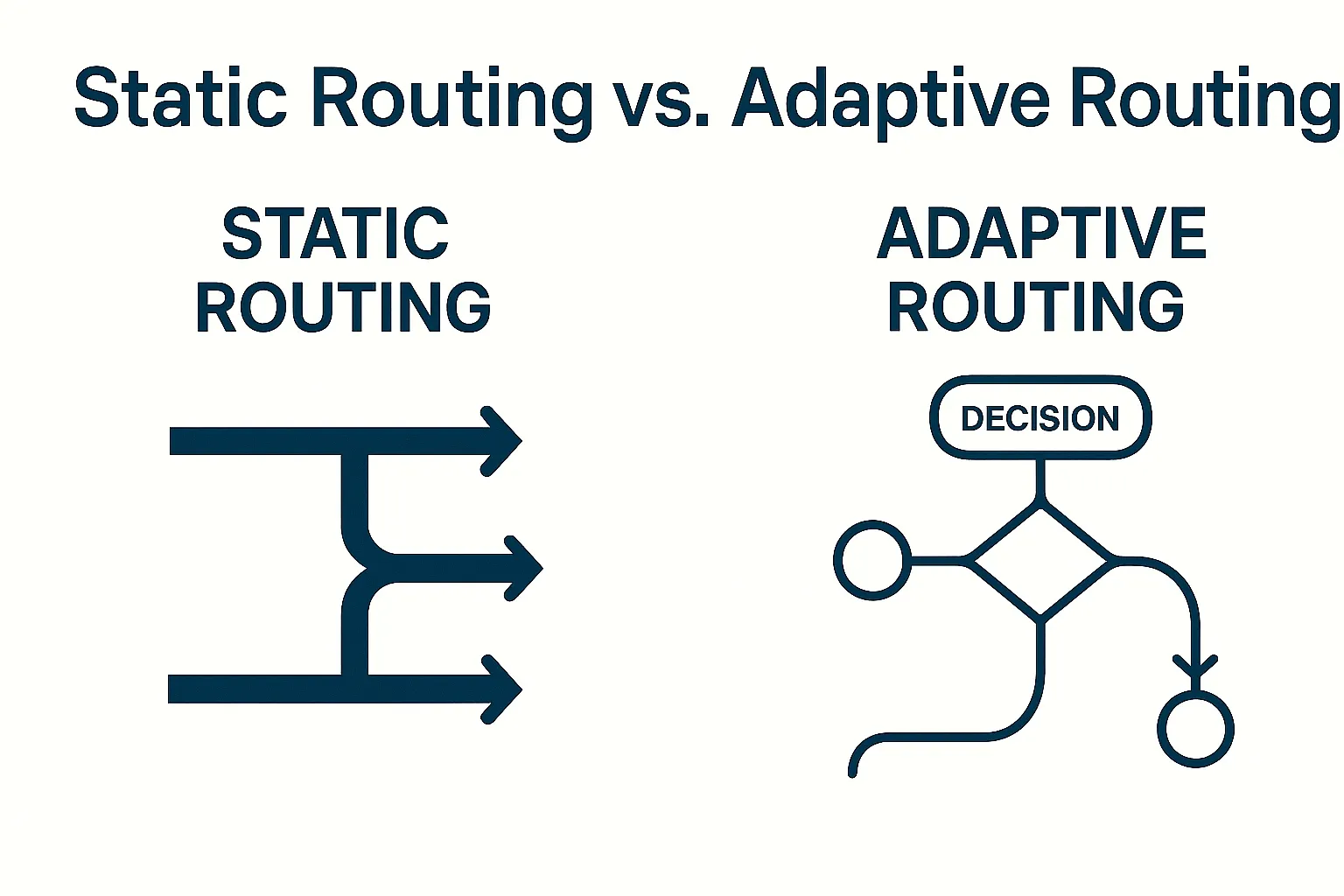

Why Static Routing Falls Short

Traditional model routing works like this:

- Use Model A for cost efficiency

- Use Model B for accuracy

- Use Model C as a fallback

But real-world usage varies wildly:

- A customer chat might need a lightweight instant model

- A legal query needs depth

- A creative query benefits from multiple models

Static logic can't handle this variety, because the rules are fixed before the query arrives. The result is wasted resources on simple queries or poor results on hard ones.
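To make the limitation concrete, here is a minimal sketch of static routing. The model names (`model-a`, `model-b`, `model-c`) and the `STATIC_ROUTES` table are hypothetical placeholders for illustration, not any real product's API.

```python
# Hypothetical static routing table: one fixed model per declared priority.
STATIC_ROUTES = {
    "cost": "model-a",      # cheapest option
    "accuracy": "model-b",  # strongest option
}
FALLBACK_MODEL = "model-c"  # used when no rule matches


def static_route(priority: str) -> str:
    """Return the model for a priority. The query itself is never inspected."""
    return STATIC_ROUTES.get(priority, FALLBACK_MODEL)


print(static_route("cost"))
print(static_route("something-else"))
```

Because the query text never enters the decision, a one-line greeting and a multi-page legal question get the same model whenever they share a priority.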

Real-Time Model Selection

Adaptive AI evaluates every query in real time.

Key signals:

- Complexity — simple vs reasoning-heavy

- User intent — speed vs accuracy vs cost

- Model health — latency, errors, uptime

Routing decisions happen in milliseconds but massively improve:

- Quality

- Cost efficiency

- System reliability
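The three signals above can be combined into a single routing decision. The sketch below is one possible way to do it, under loud assumptions: `ModelProfile`, `INTENT_WEIGHTS`, the keyword-based `estimate_complexity` heuristic, and every number in it are hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass


@dataclass
class ModelProfile:
    name: str
    quality: float     # 0..1, assumed to come from offline evaluation
    cost: float        # 0..1, relative cost per request (lower is cheaper)
    latency_ms: float  # recent average latency (model health)
    error_rate: float  # recent fraction of failed calls (model health)


# Hypothetical weights: how much each user intent cares about each factor.
INTENT_WEIGHTS = {
    "speed":    {"quality": 0.2, "speed": 0.6, "cost": 0.2},
    "accuracy": {"quality": 0.7, "speed": 0.1, "cost": 0.2},
    "cost":     {"quality": 0.2, "speed": 0.2, "cost": 0.6},
}

REASONING_CUES = {"why", "explain", "compare", "analyze", "prove"}


def estimate_complexity(query: str) -> float:
    """Crude stand-in for a complexity signal, returning a value in 0..1."""
    words = [w.strip("?,.!").lower() for w in query.split()]
    length_score = min(len(words) / 50, 1.0)
    cue_score = 1.0 if any(w in REASONING_CUES for w in words) else 0.0
    return max(length_score, cue_score)


def select_model(query, intent, models, max_error_rate=0.2):
    """Pick the highest-scoring healthy model for this query and intent."""
    healthy = [m for m in models if m.error_rate <= max_error_rate]
    candidates = healthy or models  # if everything is degraded, still answer
    complexity = estimate_complexity(query)
    w = INTENT_WEIGHTS[intent]

    def score(m: ModelProfile) -> float:
        speed = 1.0 - min(m.latency_ms / 5000, 1.0)
        # Quality counts for more as the query gets more complex.
        return (w["quality"] * (0.5 + complexity) * m.quality
                + w["speed"] * speed
                + w["cost"] * (1.0 - m.cost))

    return max(candidates, key=score)


models = [
    ModelProfile("fast-small", quality=0.60, cost=0.10, latency_ms=300, error_rate=0.01),
    ModelProfile("deep-large", quality=0.95, cost=0.80, latency_ms=2500, error_rate=0.02),
    ModelProfile("flaky", quality=0.99, cost=0.05, latency_ms=100, error_rate=0.50),
]

print(select_model("hi there", "speed", models).name)
print(select_model("Explain why this clause conflicts with the governing law",
                   "accuracy", models).name)
```

With these toy numbers, the short chat message goes to `fast-small`, the legal question goes to `deep-large`, and `flaky` is skipped in both cases because its error rate exceeds the health threshold. A production system would likely replace the keyword heuristic with a learned classifier.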

Benefits Beyond Speed & Cost

Adaptive routing makes AI more intelligent:

- Higher quality outputs

- Personalized experiences

- Efficient resource allocation

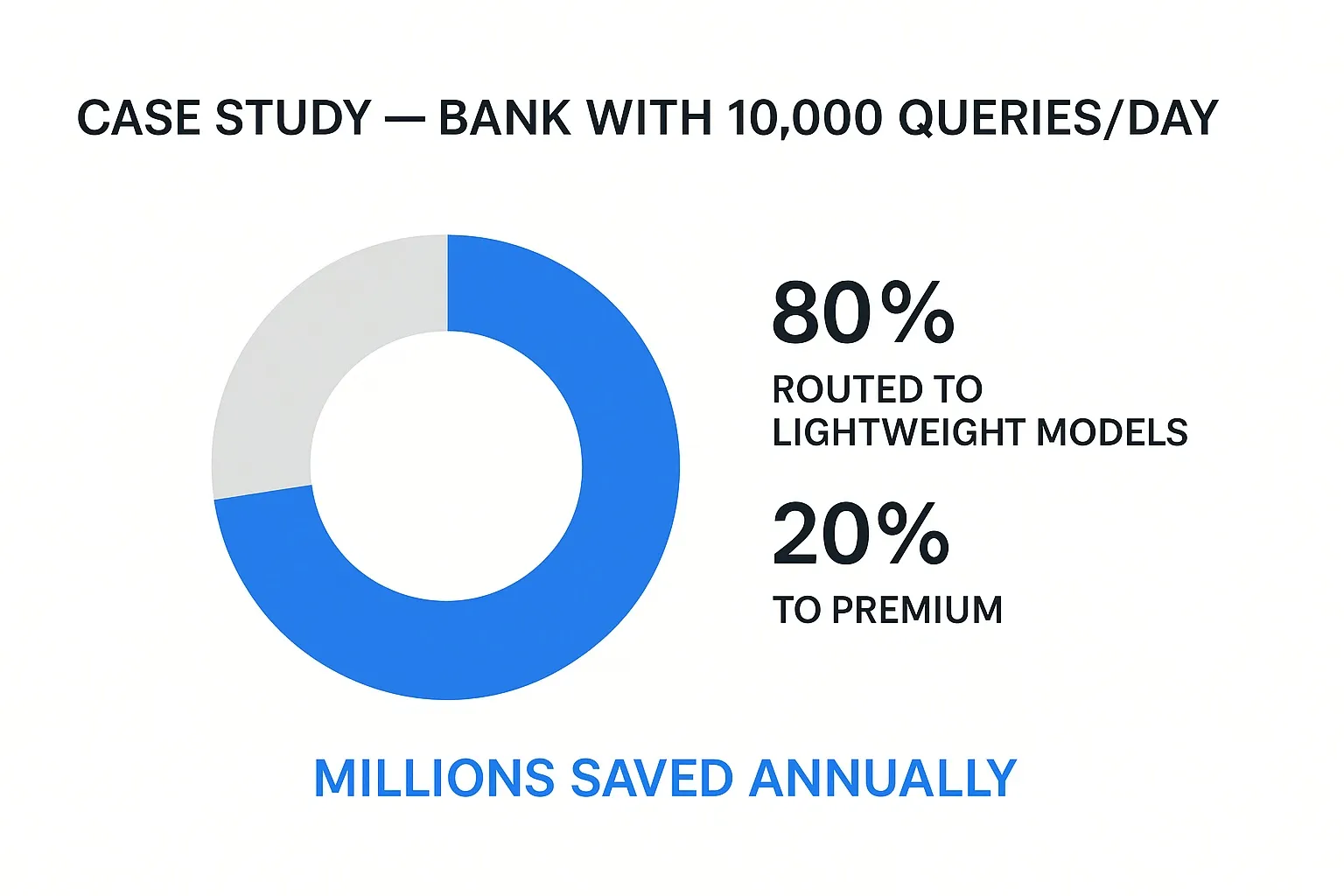

Adaptive AI can reduce cost by up to 40%.

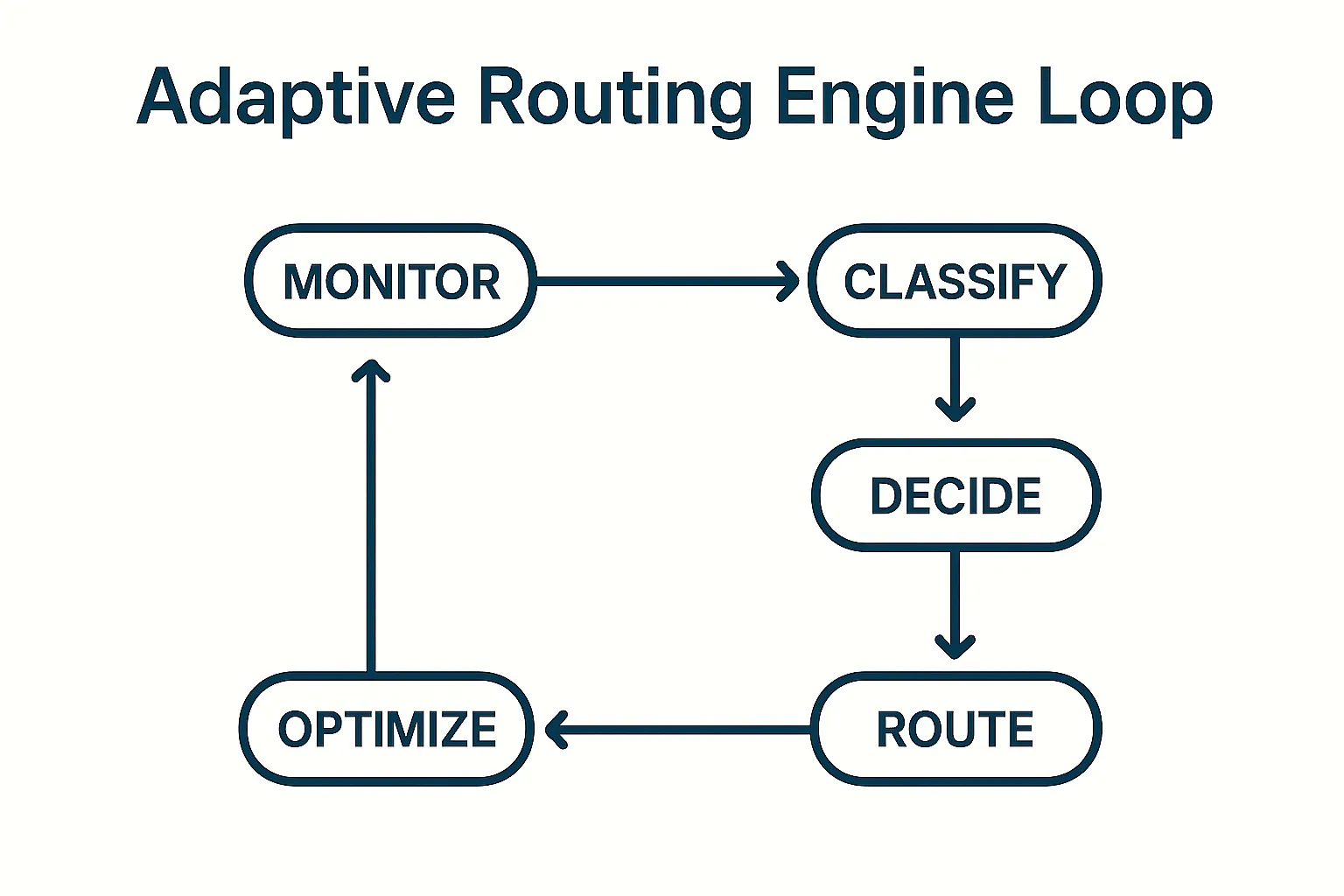

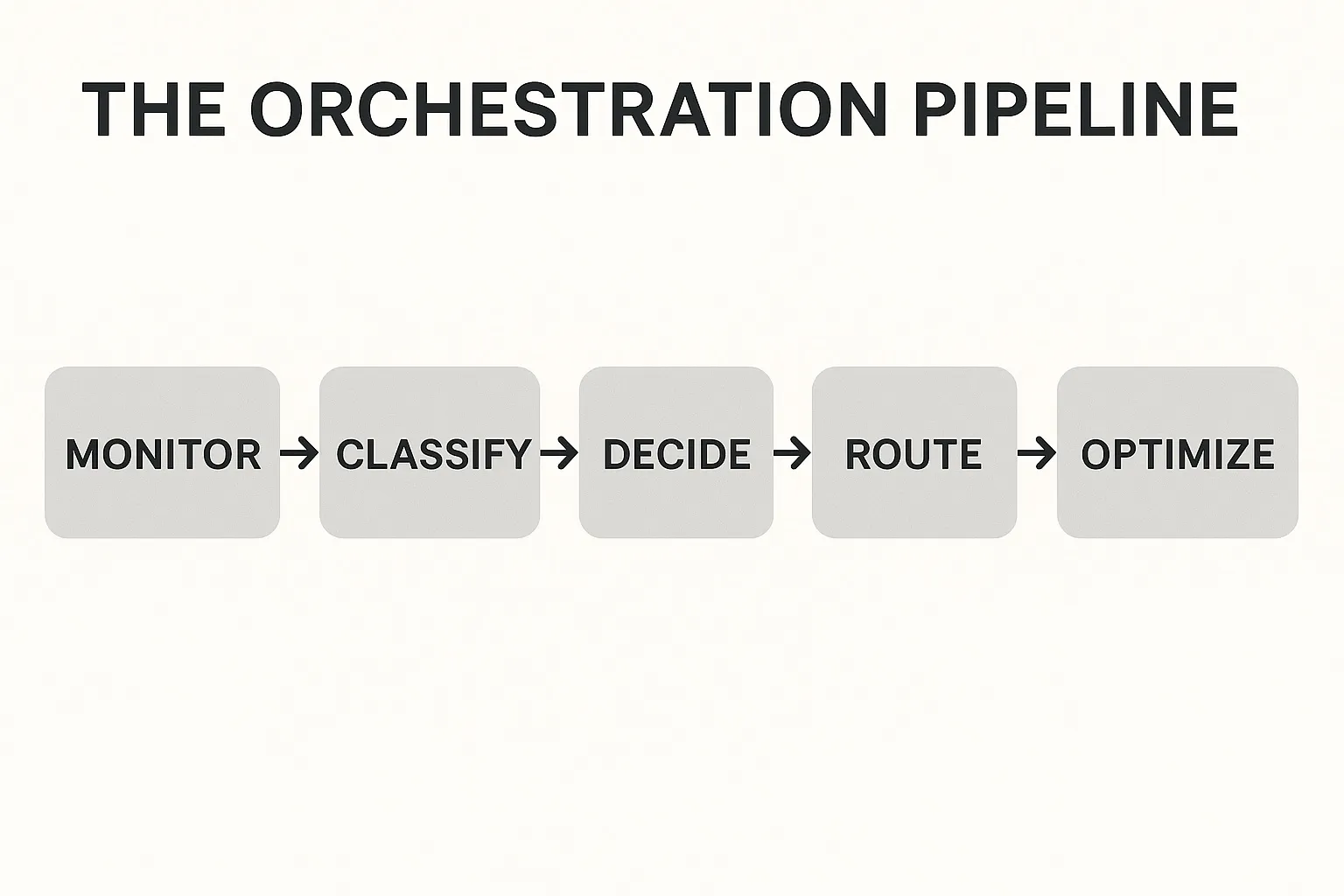

Technical Strategies for Adaptive Routing

Building adaptive AI requires orchestration beyond load balancing:

- Real-time performance monitoring

- Query classification using embeddings

- Decision engines for trade-offs

- Hybrid orchestration (combine multiple model outputs)
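Two of these building blocks, performance monitoring and embedding-based classification, can be sketched in a few lines. This is an illustrative sketch only: `HealthMonitor`, `CATEGORY_CENTROIDS`, and the three-dimensional vectors are hypothetical stand-ins. In a real system the vectors would come from an embedding model and would have hundreds of dimensions.

```python
import math
from collections import deque


class HealthMonitor:
    """Rolling window of recent call outcomes for one model."""

    def __init__(self, window: int = 100):
        # Each sample is (latency_ms, succeeded); old samples drop off automatically.
        self.samples = deque(maxlen=window)

    def record(self, latency_ms: float, succeeded: bool) -> None:
        self.samples.append((latency_ms, succeeded))

    def avg_latency_ms(self) -> float:
        if not self.samples:
            return 0.0
        return sum(lat for lat, _ in self.samples) / len(self.samples)

    def error_rate(self) -> float:
        if not self.samples:
            return 0.0
        failures = sum(1 for _, ok in self.samples if not ok)
        return failures / len(self.samples)


def cosine(a, b) -> float:
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


# Toy category centroids (assumed values, not real embeddings).
CATEGORY_CENTROIDS = {
    "chitchat": [0.9, 0.1, 0.0],
    "legal":    [0.1, 0.9, 0.2],
    "creative": [0.0, 0.2, 0.9],
}


def classify(query_embedding) -> str:
    """Return the category whose centroid is closest to the query embedding."""
    return max(CATEGORY_CENTROIDS,
               key=lambda c: cosine(query_embedding, CATEGORY_CENTROIDS[c]))


monitor = HealthMonitor(window=3)
for latency, ok in [(100, True), (200, False), (300, True), (400, True)]:
    monitor.record(latency, ok)

print(monitor.avg_latency_ms(), monitor.error_rate())
print(classify([0.2, 0.8, 0.1]))
```

The monitor's output feeds the "model health" signal, and the predicted category can feed a decision engine that weighs quality, speed, and cost for that category.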

Industry Use Cases

Healthcare

- Fast triage with lightweight models

- Complex diagnostics with high-accuracy models

Finance

- Routine checks with fast models

- Regulatory research with deep reasoning models

Customer Support

- Common queries handled instantly

- Escalation path to stronger LLMs
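The support pattern above amounts to "try the cheap model first, escalate when it is unsure". Here is a minimal sketch with stub models. It assumes each model returns a confidence score alongside its answer, which real LLM APIs do not necessarily provide; `fast_model`, `strong_model`, `FAQ`, and the `0.7` threshold are all hypothetical.

```python
from typing import Callable, Tuple

# A model is any function mapping a query to (answer, confidence in 0..1).
ModelFn = Callable[[str], Tuple[str, float]]


def answer_with_escalation(query: str, fast: ModelFn, strong: ModelFn,
                           min_confidence: float = 0.7) -> Tuple[str, str]:
    """Try the fast model first; escalate when its confidence is too low.

    Returns (answer, tier_used).
    """
    answer, confidence = fast(query)
    if confidence >= min_confidence:
        return answer, "fast"
    answer, _ = strong(query)
    return answer, "strong"


# Stub models for illustration only.
FAQ = {"reset password": "Use the 'Forgot password' link on the sign-in page."}


def fast_model(query: str) -> Tuple[str, float]:
    for key, reply in FAQ.items():
        if key in query.lower():
            return reply, 0.95  # known question, high confidence
    return "I'm not sure.", 0.2  # unknown question, low confidence


def strong_model(query: str) -> Tuple[str, float]:
    return f"[detailed answer to: {query}]", 0.9


print(answer_with_escalation("How do I reset password?", fast_model, strong_model))
print(answer_with_escalation("Why was I billed twice?", fast_model, strong_model))
```

The first query is answered by the fast tier, and the second falls through to the strong tier because the stub's confidence is below the threshold.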

Why It Matters

Static systems fall behind.

Adaptive systems stay:

- Efficient

- Competitive

- Future-proof

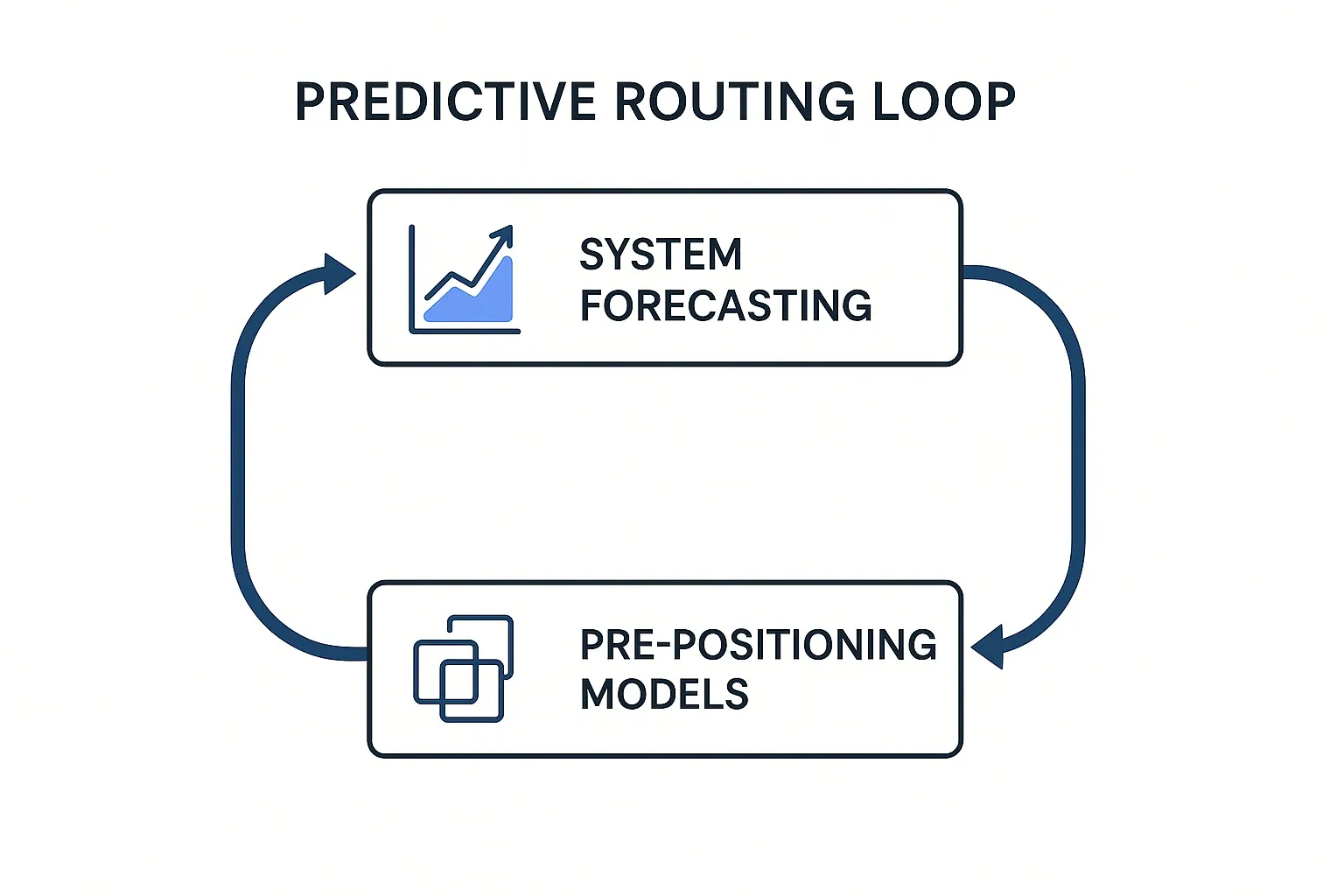

Predictive & Contextual Routing

Next-generation AI systems will:

- Predict traffic slowdowns

- Pre-select optimal models

- Use contextual memory for personalization
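One simple way to "predict slowdowns" is to track each model's latency trend and route on the projected value instead of the current one. The sketch below uses an exponentially weighted average plus a smoothed trend term. `LatencyForecast`, `preselect`, the smoothing factor, and the sample latencies are hypothetical, and a production system would likely use a more robust forecasting method.

```python
class LatencyForecast:
    """Exponentially weighted latency level plus a smoothed trend."""

    def __init__(self, alpha: float = 0.3):
        self.alpha = alpha
        self.level = None  # smoothed latency in ms
        self.trend = 0.0   # smoothed change in level per observation

    def update(self, latency_ms: float) -> None:
        if self.level is None:
            self.level = latency_ms
            return
        previous = self.level
        self.level = self.alpha * latency_ms + (1 - self.alpha) * previous
        self.trend = (self.alpha * (self.level - previous)
                      + (1 - self.alpha) * self.trend)

    def predict(self, steps_ahead: int = 1) -> float:
        """Project the level forward by extending the current trend."""
        if self.level is None:
            return 0.0
        return self.level + steps_ahead * self.trend


def preselect(forecasts: dict, steps_ahead: int = 3) -> str:
    """Pick the model with the lowest predicted latency."""
    return min(forecasts, key=lambda name: forecasts[name].predict(steps_ahead))


# Model "a" is currently faster but slowing down; model "b" is steady.
observed = {"a": [100, 150, 220, 300], "b": [250, 250, 250, 250]}
forecasts = {}
for name, latencies in observed.items():
    forecasts[name] = LatencyForecast()
    for latency in latencies:
        forecasts[name].update(latency)

print(preselect(forecasts, steps_ahead=1))
print(preselect(forecasts, steps_ahead=3))
```

With this toy data, model `a` still wins one step ahead, but three steps ahead its rising trend pushes the projection past model `b`, so the router switches before the slowdown is fully felt.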

Conclusion

Adaptive AI makes applications smarter, faster, and more reliable.

Kumari AI's orchestration:

- Learns from usage data

- Tracks real-time model health

- Classifies queries

- Selects the best model automatically

As new models emerge, adaptive routing ensures the best model is always selected.

Key Takeaways

- Static routing wastes resources

- Adaptive routing improves quality and cost

- Real-time decisions = better results

- Future = predictive + contextual model switching

Adaptive AI isn’t just efficient; it makes apps feel intelligent.